Scientific Computing

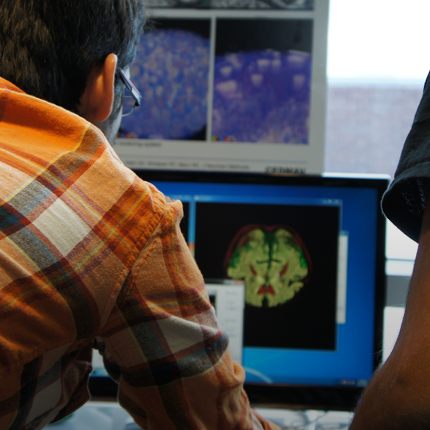

Numerical simulation of real-world phenomena provides fertile ground for building interdisciplinary relationships. The SCI Institute has a long tradition of building these relationships in a win-win fashion – a win for the theoretical and algorithmic development of numerical modeling and simulation techniques and a win for the discipline-specific science of interest. High-order and adaptive methods, uncertainty quantification, complexity analysis, and parallelization are just some of the topics being investigated by SCI faculty. These areas of computing are being applied to a wide variety of engineering applications ranging from fluid mechanics and solid mechanics to bioelectricity.

Valerio Pascucci

Scientific Data Management

Chris Johnson

Problem Solving Environments

Ross Whitaker

GPUs

Chuck Hansen

GPUs

Funded Research Projects:

Publications in Scientific Computing:

Robust topology optimization with low rank approximation using artificial neural networks, V. Keshavarzzadeh, R. M. Kirby, A. Narayan. In Computational Mechanics, 2021. DOI: 10.1007/s00466-021-02069-3

We present a low rank approximation approach for topology optimization of parametrized linear elastic structures. The parametrization is considered on loading and stiffness of the structure. The low rank approximation is achieved by identifying a parametric connection among coarse finite element models of the structure (associated with different design iterates) and is used to inform the high fidelity finite element analysis. We build an Artificial Neural Network (ANN) map between low resolution design iterates and their corresponding interpolative coefficients (obtained from low rank approximations) and use this surrogate to perform high resolution parametric topology optimization. We demonstrate our approach on robust topology optimization with compliance constraints/objective functions and develop error bounds for the parametric compliance computations. We verify these parametric computations with more challenging quantities of interest such as the p-norm of von Mises stress. To conclude, we use our approach on a 3D robust topology optimization and show significant reduction in computational cost via quantitative measures.

Facilitating Data Discovery for Large-scale Science Facilities using Knowledge Networks, Y. Qin, I. Rodero, M. Parashar. In 2021 IEEE International Parallel and Distributed Processing Symposium (IPDPS), IEEE, pp. 651-660. 2021. DOI: 10.1109/IPDPS49936.2021.00073

Large-scale multiuser scientific facilities, such as geographically distributed observatories, remote instruments, and experimental platforms, represent some of the largest national investments and can enable dramatic advances across many areas of science. Recent examples of such advances include the detection of gravitational waves and the imaging of a black hole’s event horizon. However, as the number of such facilities and their users grow, along with the complexity, diversity, and volumes of their data products, finding and accessing relevant data is becoming increasingly challenging, limiting the potential impact of facilities. These challenges are further amplified as scientists and application workflows increasingly try to integrate facilities’ data from diverse domains. In this paper, we leverage concepts underlying recommender systems, which are extremely effective in e-commerce, to address these data-discovery and data-access challenges for large-scale distributed scientific facilities. We first analyze data from facilities and identify and model user-query patterns in terms of facility location and spatial localities, domain-specific data models, and user associations. We then use this analysis to generate a knowledge graph and develop the collaborative knowledge-aware graph attention network (CKAT) recommendation model, which leverages graph neural networks (GNNs) to explicitly encode the collaborative signals through propagation and combine them with knowledge associations. Moreover, we integrate a knowledge-aware neural attention mechanism to enable the CKAT to pay more attention to key information while reducing irrelevant noise, thereby increasing the accuracy of the recommendations. We apply the proposed model on two real-world facility datasets and empirically demonstrate that the CKAT can effectively facilitate data discovery, significantly outperforming several compelling state-of-the-art baseline models.

Facilitating Staging-based Unstructured Mesh Processing to Support Hybrid In-Situ Workflows, Z. Wang, P. Subedi, M. Dorier, P.E. Davis, M. Parashar. In 2021 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW), pp. 960-964. 2021. DOI: 10.1109/IPDPSW52791.2021.00152

In-situ and in-transit processing alleviate the gap between the computing and I/O capabilities by scheduling data analytics close to the data source. Hybrid in-situ processing splits data analytics into two stages: the data processing that runs in-situ aims to extract regions of interest, which are then transferred to staging services for further in-transit analytics. To facilitate this type of hybrid in-situ processing, the data staging service needs to support complex intermediate data representations generated/consumed by the in-situ tasks. Unstructured (or irregular) mesh is one such derived data representation that is typically used and bridges simulation data and analytics. However, how staging services efficiently support unstructured mesh transfer and processing remains to be explored. This paper investigates design options for transferring and processing unstructured mesh data using staging services. Using polygonal mesh data as an example, we show that hybrid in-situ workflows with staging-based unstructured mesh processing can effectively support hybrid in-situ workflows, and can significantly decrease data movement overheads.

Investigating In Situ Reduction via Lagrangian Representations for Cosmology and Seismology Applications, S. Sane, C. R. Johnson, H. Childs. In Computational Science – ICCS 2021, Springer International Publishing, pp. 436-450. 2021. DOI: 10.1007/978-3-030-77961-0_36

Although many types of computational simulations produce time-varying vector fields, subsequent analysis is often limited to single time slices due to excessive costs. Fortunately, a new approach using a Lagrangian representation can enable time-varying vector field analysis while mitigating these costs. With this approach, a Lagrangian representation is calculated while the simulation code is running, and the result is explored after the simulation. Importantly, the effectiveness of this approach varies based on the nature of the vector field, requiring in-depth investigation for each application area. With this study, we evaluate the effectiveness for previously unexplored cosmology and seismology applications. We do this by considering encumbrance (on the simulation) and accuracy (of the reconstructed result). To inform encumbrance, we integrated in situ infrastructure with two simulation codes, and evaluated on representative HPC environments, performing Lagrangian in situ reduction using GPUs as well as CPUs. To inform accuracy, our study conducted a statistical analysis across a range of spatiotemporal configurations as well as a qualitative evaluation. In all, we demonstrate effectiveness for both cosmology and seismology: time-varying vector fields from these domains can be reduced to less than 1% of the total data via Lagrangian representations, while maintaining accurate reconstruction and requiring under 10% of total execution time in over 80% of our experiments.

Leveraging user access patterns and advanced cyberinfrastructure to accelerate data delivery from shared-use scientific observatories, Y. Qin, I. Rodero, A. Simonet, C. Meertens, D. Reiner, J. Riley, M. Parashar. In Future Generation Computer Systems, North-Holland, pp. 14-27. 2021. DOI: https://doi.org/10.1016/j.future.2021.03.004

With the growing number and increasing availability of shared-use instruments and observatories, observational data is becoming an essential part of application workflows and contributor to scientific discoveries in a range of disciplines. However, the corresponding growth in the number of users accessing these facilities, coupled with the expansion in the scale and variety of the data, is making it challenging for these facilities to ensure their data can be accessed, integrated, and analyzed in a timely manner, and is resulting in significant demands on their cyberinfrastructure (CI). In this paper, we present the design of a push-based data delivery framework that leverages emerging in-network capabilities, along with data pre-fetching techniques based on a hybrid data management model. Specifically, we analyze data access traces for two large-scale observatories, Ocean Observatories Initiative (OOI) and Geodetic Facility for the Advancement of Geoscience (GAGE), to identify typical user access patterns and to develop a model that can be used for data pre-fetching. Furthermore, we evaluate our data pre-fetching model and the proposed framework using a simulation of the Virtual Data Collaboratory (VDC) platform that provides in-network data staging and processing capabilities. The results demonstrate the ability of the framework to significantly improve data delivery performance and reduce network traffic at the observatories’ facilities.

Kernel optimization for Low-Rank Multi-Fidelity Algorithms, M. Razi, M. Kirby, A. Narayan. In International Journal for Uncertainty Quantification, Begell House Inc., pp. 31-54. 2021.

One of the major challenges for low-rank multi-fidelity (MF) approaches is the assumption that low-fidelity (LF) and high-fidelity (HF) models admit "similar" low-rank kernel representations. Low-rank MF methods have traditionally attempted to exploit low-rank representations of linear kernels. However, such linear kernels may not be able to capture low-rank behavior, and they may admit LF and HF kernels that are not similar. Such a situation renders a naive approach to low-rank MF procedures ineffective. In this paper, we propose a novel approach for the selection of a near-optimal kernel function for use in low-rank MF methods. The proposed framework is a two-step strategy wherein: (1) hyperparameters of a library of kernel functions are optimized, and (2) a particular combination of the optimized kernels is selected, through either a convex mixture (Additive Kernel Approach) or through a data-driven …