Visualization

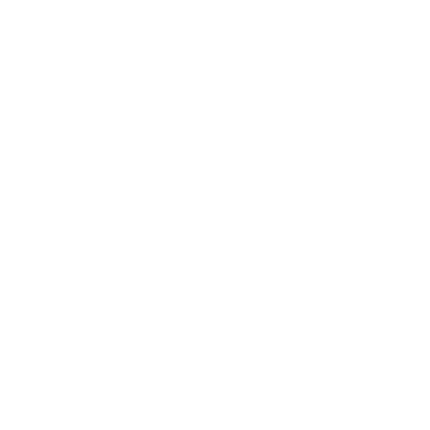

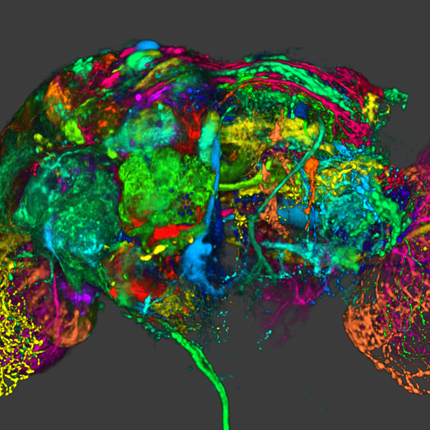

Visualization, sometimes referred to as visual data analysis, uses the graphical representation of data as a means of gaining understanding and insight into the data. Visualization research at SCI has focused on applications spanning computational fluid dynamics, medical imaging and analysis, biomedical data analysis, healthcare data analysis, weather data analysis, poetry, network and graph analysis, and financial data analysis, among others. Our research ranges from developing novel algorithms and techniques to building tools and systems that assist in the comprehension of massive amounts of (scientific) data. We also research the process of creating successful visualizations.

We strongly believe in the role of interactivity in visual data analysis. Therefore, much of our research is concerned with creating visualizations that are intuitive to interact with and also render at interactive rates.

Visualization at SCI includes the academic subfields of Scientific Visualization, Information Visualization and Visual Analytics.

Mike Kirby

Uncertainty Visualization

Alex Lex

Information Visualization

Centers and Labs:

- Visualization Design Lab (VDL)

- CEDMAV

- POWDER Display Wall

Funded Research Projects:

- Modeling, Display, and Understanding Uncertainty in Simulations for Policy Decision Making

- Topological Data Analysis for Large Network Visualization

Publications in Visualization:

Adaptive Spatially Aware I/O for Multiresolution Particle Data Layouts
W. Usher, X. Huang, S. Petruzza, S. Kumar, S. R. Slattery, S. T. Reeve, F. Wang, C. R. Johnson, V. Pascucci. In IPDPS, 2021.

HyperLabels---Browsing of Dense and Hierarchical Molecular 3D Models
D Kouřil, T Isenberg, B Kozlíková, M Meyer, E Gröller, I Viola. In IEEE Transactions on Visualization and Computer Graphics, IEEE, 2021. DOI: 10.1109/TVCG.2020.2975583
We present a method for the browsing of hierarchical 3D models in which we combine the typical navigation of hierarchical structures in a 2D environment---using clicks on nodes, links, or icons---with a 3D spatial data visualization. Our approach is motivated by large molecular models, for which the traditional single-scale navigational metaphors are not suitable. Multi-scale phenomena, e.g., in astronomy or geography, are complex to navigate due to their large data spaces and multi-level organization. Models from structural biology are in addition also densely crowded in space and scale. Cutaways are needed to show individual model subparts. The camera has to support exploration on the level of a whole virus, as well as on the level of a small molecule. We address these challenges by employing HyperLabels: active labels that---in addition to their annotational role---also support user interaction. Clicks on HyperLabels select the next structure to be explored. Then, we adjust the visualization to showcase the inner composition of the selected subpart and enable further exploration. Finally, we use a breadcrumbs panel for orientation and as a mechanism to traverse upwards in the model hierarchy. We demonstrate our concept of hierarchical 3D model browsing using two exemplary models from meso-scale biology.

reVISit: Looking Under the Hood of Interactive Visualization Studies
C. Nobre, D. Wootton, Z. T. Cutler, L. Harrison, H. Pfister, A. Lex. In SIGCHI Conference on Human Factors in Computing Systems (CHI), ACM, pp. 1--12. 2021. DOI: 10.31219/osf.io/cbw36
Quantifying user performance with metrics such as time and accuracy does not show the whole picture when researchers evaluate complex, interactive visualization tools. In such systems, performance is often influenced by different analysis strategies that statistical analysis methods cannot account for. To remedy this lack of nuance, we propose a novel analysis methodology for evaluating complex interactive visualizations at scale. We implement our analysis methods in reVISit, which enables analysts to explore participant interaction performance metrics and responses in the context of users' analysis strategies. Replays of participant sessions can aid in identifying usability problems during pilot studies and make individual analysis processes salient. To demonstrate the applicability of reVISit to visualization studies, we analyze participant data from two published crowdsourced studies. Our findings show that reVISit can be used to reveal and describe novel interaction patterns, to analyze performance differences between different analysis strategies, and to validate or challenge design decisions.

Lessons learned towards the immediate delivery of massive aerial imagery to farmers and crop consultants
A. A. Gooch, S. Petruzza, A. Gyulassy, G. Scorzelli, V. Pascucci, L. Rantham, W. Adcock, C. Coopmans. In Autonomous Air and Ground Sensing Systems for Agricultural Optimization and Phenotyping VI, Vol. 11747, International Society for Optics and Photonics, pp. 22--34. 2021. DOI: 10.1117/12.2587694
In this paper, we document lessons learned from using ViSOAR Ag Explorer™ in the fields of Arkansas and Utah in the 2018-2020 growing seasons. Our insights come from creating software with fast reading and writing of 2D aerial image mosaics for platform-agnostic collaborative analytics and visualization. We currently enable stitching in the field on a laptop without the need for an internet connection. The full resolution result is then available for instant streaming visualization and analytics via Python scripting. While our software, ViSOAR Ag Explorer™, removes the time and labor software bottleneck in processing large aerial surveys, enabling a cost-effective process to deliver actionable information to farmers, we learned valuable lessons with regard to the acquisition, storage, viewing, analysis, and planning stages of aerial data surveys. Additionally, with the ultimate goal of stitching thousands of images in minutes on board a UAV at the time of data capture, we performed preliminary tests for on-board, real-time stitching and analysis on USU AggieAir sUAS using lightweight computational resources. This system is able to create a 2D map while flying and allow interactive exploration of the full resolution data as soon as the platform has landed or has access to a network. This capability further speeds up the assessment process on the field and opens opportunities for new real-time photogrammetry applications. Flying and imaging over 1500-2000 acres per week provides up-to-date maps that give crop consultants a much broader scope of the field in general as well as providing a better view into planting and field preparation than could be observed from field level. Ultimately, our software and hardware could provide a much better understanding of weed presence and intensity or lack thereof.

Data-Driven Space-Filling Curves
L. Zhou, C. R. Johnson, D. Weiskopf. In IEEE Transactions on Visualization and Computer Graphics, Vol. 27, No. 2, IEEE, pp. 1591-1600. 2021. DOI: 10.1109/TVCG.2020.3030473
We propose a data-driven space-filling curve method for 2D and 3D visualization. Our flexible curve traverses the data elements in the spatial domain in a way that the resulting linearization better preserves features in space compared to existing methods. We achieve such data coherency by calculating a Hamiltonian path that approximately minimizes an objective function that describes the similarity of data values and location coherency in a neighborhood. Our extended variant even supports multiscale data via quadtrees and octrees. Our method is useful in many areas of visualization, including multivariate or comparative visualization, ensemble visualization of 2D and 3D data on regular grids, or multiscale visual analysis of particle simulations. The effectiveness of our method is evaluated with numerical comparisons to existing techniques and through examples of ensemble and multivariate datasets.
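
The idea in the abstract above, ordering grid cells along a single path so that consecutive cells have similar values and nearby locations, can be illustrated with a toy greedy heuristic. This is a minimal sketch and not the authors' method: the function name `greedy_data_driven_path`, the weight `alpha`, and the cost definition are all assumptions made for illustration, and a greedy choice gives no guarantee of approaching the minimum of any objective.

```python
import math


def step_cost(grid, current, candidate, alpha):
    """Hypothetical cost of moving from `current` to `candidate`:
    a weighted sum of data-value difference and Euclidean distance."""
    value_term = abs(grid[candidate[0]][candidate[1]] - grid[current[0]][current[1]])
    distance_term = math.dist(candidate, current)
    return alpha * value_term + (1.0 - alpha) * distance_term


def greedy_data_driven_path(grid, alpha=0.5):
    """Visit every cell of a 2D grid exactly once, starting at (0, 0).

    At each step the unvisited cell with the lowest step cost is chosen.
    The result visits each cell once (a Hamiltonian path over the cells,
    with jumps between non-adjacent cells allowed in this toy version).
    """
    rows, cols = len(grid), len(grid[0])
    unvisited = {(r, c) for r in range(rows) for c in range(cols)}
    current = (0, 0)
    unvisited.remove(current)
    path = [current]
    while unvisited:
        # Break cost ties by cell coordinates so the result is deterministic.
        nxt = min(unvisited, key=lambda cell: (step_cost(grid, current, cell, alpha), cell))
        unvisited.remove(nxt)
        path.append(nxt)
        current = nxt
    return path


# Example: linearize a small scalar field along the greedy path.
field = [
    [0.0, 0.1, 5.0],
    [0.2, 5.1, 5.2],
]
path = greedy_data_driven_path(field, alpha=0.8)
linearized = [field[r][c] for r, c in path]
```

Raising `alpha` favors keeping similar values adjacent in the linearization, while lowering it favors spatial locality; a fixed curve such as a row-major scan ignores the data values entirely.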

A virtual frame buffer abstraction for parallel rendering of large tiled display walls
M. Han, I. Wald, W. Usher, N. Morrical, A. Knoll, V. Pascucci, C. R. Johnson. In 2020 IEEE Visualization Conference (VIS), pp. 11--15. 2020. DOI: 10.1109/VIS47514.2020.00009
We present dw2, a flexible and easy-to-use software infrastructure for interactive rendering of large tiled display walls. Our library represents the tiled display wall as a single virtual screen through a display "service", which renderers connect to and send image tiles to be displayed, either from an on-site or remote cluster. The display service can be easily configured to support a range of typical network and display hardware configurations; the client library provides a straightforward interface for easy integration into existing renderers. We evaluate the performance of our display wall service in different configurations using a CPU and GPU ray tracer, in both on-site and remote rendering scenarios using multiple display walls.

Uncertainty Visualization of 2D Morse Complex Ensembles using Statistical Summary Maps
T. M. Athawale, D. Maljovec, L. Yan, C. R. Johnson, V. Pascucci, B. Wang. In IEEE Transactions on Visualization and Computer Graphics, 2020. DOI: 10.1109/TVCG.2020.3022359
Morse complexes are gradient-based topological descriptors with close connections to Morse theory. They are widely applicable in scientific visualization as they serve as important abstractions for gaining insights into the topology of scalar fields. Noise inherent to scalar field data due to acquisitions and processing, however, limits our understanding of the Morse complexes as structural abstractions. We, therefore, explore uncertainty visualization of an ensemble of 2D Morse complexes that arise from scalar fields coupled with data uncertainty. We propose statistical summary maps as new entities for capturing structural variations and visualizing positional uncertainties of Morse complexes in ensembles. Specifically, we introduce two types of statistical summary maps -- the Probabilistic Map and the Survival Map -- to characterize the uncertain behaviors of local extrema and local gradient flows, respectively. We demonstrate the utility of our proposed approach using synthetic and real-world datasets.

High-Quality Rendering of Glyphs Using Hardware-Accelerated Ray Tracing
S. Zellmann, M. Aumüller, N. Marshak, I. Wald. In Eurographics Symposium on Parallel Graphics and Visualization (EGPGV), The Eurographics Association, 2020. DOI: 10.2312/pgv.20201076
Glyph rendering is an important scientific visualization technique for 3D, time-varying simulation data and for higher-dimensional data in general. Though conceptually simple, there are several different challenges when realizing glyph rendering on top of triangle rasterization APIs, such as possibly prohibitive polygon counts, limitations of what shapes can be used for the glyphs, issues with visual clutter, etc. In this paper, we investigate the use of hardware ray tracing for high-quality, high-performance glyph rendering, and show that this not only leads to a more flexible and often more elegant solution for dealing with number and shape of glyphs, but that this can also help address visual clutter, and even provide additional visual cues that can enhance understanding of the dataset.

A Terminology for In Situ Visualization and Analysis Systems
H. Childs, S. D. Ahern, J. Ahrens, A. C. Bauer, J. Bennett, E. W. Bethel, P. Bremer, E. Brugger, J. Cottam, M. Dorier, S. Dutta, J. M. Favre, T. Fogal, S. Frey, C. Garth, B. Geveci, W. F. Godoy, C. D. Hansen, C. Harrison, B. Hentschel, J. Insley, C. R. Johnson, S. Klasky, A. Knoll, J. Kress, M. Larsen, J. Lofstead, K. Ma, P. Malakar, J. Meredith, K. Moreland, P. Navratil, P. O’Leary, M. Parashar, V. Pascucci, J. Patchett, T. Peterka, S. Petruzza, N. Podhorszki, D. Pugmire, M. Rasquin, S. Rizzi, D. H. Rogers, S. Sane, F. Sauer, R. Sisneros, H. Shen, W. Usher, R. Vickery, V. Vishwanath, I. Wald, R. Wang, G. H. Weber, B. Whitlock, M. Wolf, H. Yu, S. B. Ziegeler. In International Journal of High Performance Computing Applications, Vol. 34, No. 6, pp. 676–691. 2020. DOI: 10.1177/1094342020935991
The term “in situ processing” has evolved over the last decade to mean both a specific strategy for visualizing and analyzing data and an umbrella term for a processing paradigm. The resulting confusion makes it difficult for visualization and analysis scientists to communicate with each other and with their stakeholders. To address this problem, a group of over fifty experts convened with the goal of standardizing terminology. This paper summarizes their findings and proposes a new terminology for describing in situ systems. An important finding from this group was that in situ systems are best described via multiple, distinct axes: integration type, proximity, access, division of execution, operation controls, and output type. This paper discusses these axes, evaluates existing systems within the axes, and explores how currently used terms relate to the axes.

Remembering Bill Lorensen: The Man, the Myth, and Marching Cubes
C. R. Johnson, T. Kapur, W. Schroeder, T. Yoo. In IEEE Computer Graphics and Applications, Vol. 40, No. 2, pp. 112-118. March, 2020. DOI: 10.1109/MCG.2020.2971168

Interactive Rendering of Large-Scale Volumes on Multi-Core CPUs
F. Wang, I. Wald, C. R. Johnson. In 2019 IEEE 9th Symposium on Large Data Analysis and Visualization (LDAV), pp. 27--36. 2019. DOI: 10.1109/LDAV48142.2019.8944267
Recent advances in large-scale simulations have resulted in volume data of increasing size that stress the capabilities of off-the-shelf visualization tools. Users suffer from long data loading times, because large data must be read from disk into memory prior to rendering the first frame. In this work, we present a volume renderer that enables high-fidelity interactive visualization of large volumes on multi-core CPU architectures. Compared to existing CPU-based visualization frameworks, which take minutes or hours for data loading, our renderer allows users to get a data overview in seconds. Using a hierarchical representation of raw volumes and ray-guided streaming, we reduce the data loading time dramatically and improve the user's interactivity experience. We also examine system design choices with respect to performance and scalability. Specifically, we evaluate the hierarchy generation time, which has been ignored in most prior work, but which can become a significant bottleneck as data scales. Finally, we create a module on top of the OSPRay ray tracing framework that is ready to be integrated into general-purpose visualization frameworks such as ParaView.