Image Analysis

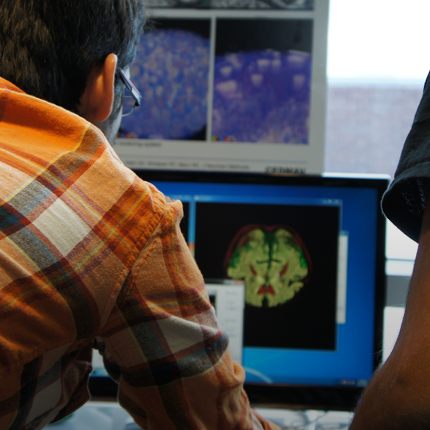

SCI's imaging work addresses fundamental questions in 2D and 3D image processing, including filtering, segmentation, surface reconstruction, and shape analysis. In low-level image processing, this effort has produced new nonparametric methods for modeling image statistics, which have resulted in better algorithms for denoising and reconstruction. Work with particle systems has led to new methods for visualizing and analyzing 3D surfaces. Our work in image processing also includes applications of advanced computing to 3D images, which has resulted in new parallel algorithms and real-time implementations on graphics processing units (GPUs). Application areas include medical image analysis, biological image processing, defense, environmental monitoring, and oil and gas.

Ross Whitaker

Segmentation

Chris Johnson

Diffusion Tensor Analysis

Funded Research Projects:

Publications in Image Analysis:

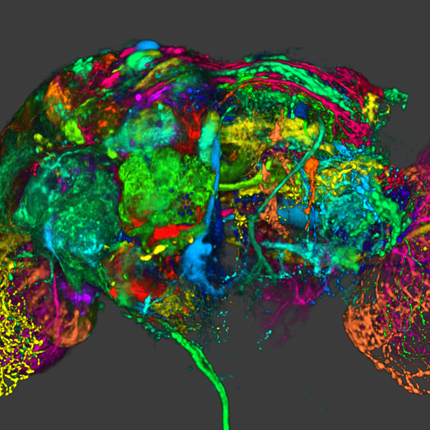

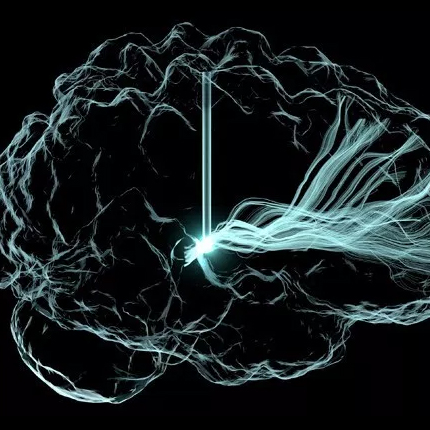

Modeling the Shape of the Brain Connectome via Deep Neural Networks, H. Dai, M. Bauer, P.T. Fletcher, S. Joshi. In Information Processing in Medical Imaging, Springer Nature Switzerland, pp. 291-302. 2023. ISBN: 978-3-031-34048-2

The goal of diffusion-weighted magnetic resonance imaging (DWI) is to infer the structural connectivity of an individual subject's brain in vivo. To statistically study the variability and differences between normal and abnormal brain connectomes, a mathematical model of the neural connections is required. In this paper, we represent the brain connectome as a Riemannian manifold, which allows us to model neural connections as geodesics. This leads to the challenging problem of estimating a Riemannian metric that is compatible with the DWI data, i.e., a metric such that the geodesic curves represent individual fiber tracts of the connectomics. We reduce this problem to that of solving a highly nonlinear set of partial differential equations (PDEs) and study the applicability of convolutional encoder-decoder neural networks (CEDNNs) for solving this geometrically motivated PDE. Our method achieves excellent performance in the alignment of geodesics with white matter pathways and tackles a long-standing issue in previous geodesic tractography methods: the inability to recover crossing fibers with high fidelity. Code is available at https://github.com/aarentai/Metric-Cnn-3D-IPMI.

Multi-level multi-domain statistical shape model of the subtalar, talonavicular, and calcaneocuboid joints, A.C. Peterson, R.J. Lisonbee, N. Krähenbühl, C.L. Saltzman, A. Barg, N. Khan, S. Elhabian, A.L. Lenz. In Frontiers in Bioengineering and Biotechnology, 2022. DOI: 10.3389/fbioe.2022.1056536

Traditionally, two-dimensional conventional radiographs have been the primary tool to measure the complex morphology of the foot and ankle. However, the subtalar, talonavicular, and calcaneocuboid joints are challenging to assess due to their bone morphology and locations within the ankle. Weightbearing computed tomography is a novel high-resolution volumetric imaging mechanism that allows detailed generation of 3D bone reconstructions. This study aimed to develop a multi-domain statistical shape model to assess morphologic and alignment variation of the subtalar, talonavicular, and calcaneocuboid joints across an asymptomatic population and calculate 3D joint measurements in a consistent weightbearing position. Specific joint measurements included joint space distance, congruence, and coverage. Noteworthy anatomical variation predominantly included the talus and calcaneus, specifically an inverse relationship regarding talar dome heightening and calcaneal shortening. While there was minimal navicular and cuboid shape variation, there were alignment variations within these joints; the most notable is the rotational aspect about the anterior-posterior axis. This study also found that multi-domain modeling may be able to predict joint space distance measurements within a population. Additionally, variation across a population of these four bones may be driven far more by morphology than by alignment variation based on all three joint measurements. These data are beneficial in furthering our understanding of joint-level morphology and alignment variants to guide advancements in ankle joint pathological care and operative treatments.

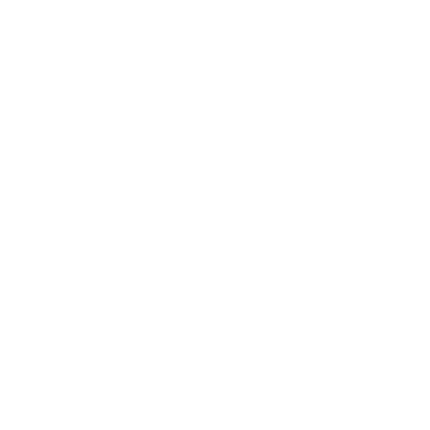

High-Quality Progressive Alignment of Large 3D Microscopy Data, A. Venkat, D. Hoang, A. Gyulassy, P.T. Bremer, F. Federer, V. Pascucci. In 2022 IEEE 12th Symposium on Large Data Analysis and Visualization (LDAV), pp. 1-10. 2022. DOI: 10.1109/LDAV57265.2022.9966406

Large-scale three-dimensional (3D) microscopy acquisitions frequently create terabytes of image data at high resolution and magnification. Imaging large specimens at high magnifications requires acquiring 3D overlapping image stacks as tiles arranged on a two-dimensional (2D) grid that must subsequently be aligned and fused into a single 3D volume. Due to their sheer size, aligning many overlapping gigabyte-sized 3D tiles in parallel and at full resolution is memory intensive and often I/O bound. Current techniques trade accuracy for scalability, perform alignment on subsampled images, and require additional postprocess algorithms to refine the alignment quality, usually with high computational requirements. One common solution to the memory problem is to subdivide the overlap region into smaller chunks (sub-blocks) and align the sub-block pairs in parallel, choosing the pair with the most reliable alignment to determine the global transformation. Yet aligning all sub-block pairs at full resolution remains computationally expensive. The key to quickly developing a fast, high-quality, low-memory solution is to identify a single or a small set of sub-blocks that give good alignment at full resolution without touching all the overlapping data. In this paper, we present a new iterative approach that leverages coarse resolution alignments to progressively refine and align only the promising candidates at finer resolutions, thereby aligning only a small user-defined number of sub-blocks at full resolution to determine the lowest error transformation between pairwise overlapping tiles. Our progressive approach is 2.6x faster than the state of the art, requires less than 450MB of peak RAM (per parallel thread), and offers a higher quality alignment without the need for additional postprocessing refinement steps to correct for alignment errors.
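The coarse-to-fine idea in this abstract can be illustrated with a toy one-dimensional sketch. This is not the authors' implementation: the function names, the mean-squared-error criterion, the single coarse level, and the synthetic "tiles" are all assumptions made for illustration. Every sub-block is ranked cheaply on downsampled data, and only the `keep` best candidates are refined at full resolution.

```python
import random

def downsample(signal, factor):
    """Average consecutive groups of `factor` samples (a coarser level)."""
    return [sum(signal[i:i + factor]) / factor
            for i in range(0, len(signal) - factor + 1, factor)]

def block_error(fixed, moving, start, size, shift):
    """Mean squared difference between fixed[start:start+size] and the same
    block of `moving` displaced by `shift`; inf if the block leaves the tile."""
    if start + shift < 0 or start + size + shift > len(moving):
        return float("inf")
    return sum((fixed[i] - moving[i + shift]) ** 2
               for i in range(start, start + size)) / size

def best_shift(fixed, moving, start, size, shifts):
    """Return (error, shift) for the lowest-error shift among `shifts`."""
    return min((block_error(fixed, moving, start, size, s), s) for s in shifts)

def progressive_align(fixed, moving, block_size, max_shift, factor, keep):
    """Rank all sub-blocks at coarse resolution, then refine only the
    `keep` most promising ones at full resolution."""
    coarse_f = downsample(fixed, factor)
    coarse_m = downsample(moving, factor)
    coarse_range = range(-(max_shift // factor), max_shift // factor + 1)
    candidates = []
    for start in range(0, len(fixed) - block_size + 1, block_size):
        err, s = best_shift(coarse_f, coarse_m, start // factor,
                            block_size // factor, coarse_range)
        candidates.append((err, s, start))
    candidates.sort()  # lowest coarse error first
    refined = []
    for _, coarse_s, start in candidates[:keep]:
        # Search only a small window around the upscaled coarse estimate.
        fine_range = range(coarse_s * factor - factor,
                           coarse_s * factor + factor + 1)
        refined.append(best_shift(fixed, moving, start, block_size, fine_range))
    return min(refined)  # (error, shift) of the best refined sub-block

# Synthetic overlap region (hypothetical data): tile_b[i + 8] == tile_a[i].
rng = random.Random(0)
scene = [rng.gauss(0.0, 1.0) for _ in range(264)]
tile_b, tile_a = scene[:256], scene[8:]
error, shift = progressive_align(tile_a, tile_b, block_size=64,
                                 max_shift=16, factor=4, keep=2)
```

In this toy setup only two sub-blocks are ever compared at full resolution, each over nine candidate shifts, instead of all four sub-blocks over the full search range. A real pipeline would work on 3D volumes, use several resolution levels, and likely a more robust similarity measure.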

430 Training neural networks to identify built environment features for pedestrian safety, A. Quistberg, C.I. Gonzalez, P. Arbeláez, O.L. Sarmiento, L. Baldovino-Chiquillo, Q. Nguyen, T. Tasdizen, L.A.G. Garcia, D. Hidalgo, S.J. Mooney, A.V.D. Roux, G. Lovasi. In Injury Prevention, Vol. 28, No. 2, BMJ, pp. A65. 2022. DOI: 10.1136/injuryprev-2022-safety2022.194

Theory-guided physics-informed neural networks for boundary layer problems with singular perturbation, A. Arzani, K.W. Cassel, R.M. D'Souza. In Journal of Computational Physics, 2022. DOI: 10.1016/j.jcp.2022.111768

Physics-informed neural networks (PINNs) are a recent trend in scientific machine learning research and modeling of differential equations. Despite progress in PINN research, large gradients and highly nonlinear patterns remain challenging to model. Thin boundary layer problems are prominent examples of large gradients that commonly arise in transport problems. In this study, boundary-layer PINN (BL-PINN) is proposed to enable a solution to thin boundary layers by considering them as a singular perturbation problem. Inspired by the classical perturbation theory and asymptotic expansions, BL-PINN is designed to replicate the procedure in singular perturbation theory. Namely, different parallel PINN networks are defined to represent different orders of approximation to the boundary layer problem in the inner and outer regions. In different benchmark problems (forward and inverse), BL-PINN shows superior performance compared to the traditional PINN approach and is able to produce accurate results, whereas the classical PINN approach could not provide meaningful solutions. BL-PINN also demonstrates significantly better results compared to other extensions of PINN such as the extended PINN (XPINN) approach. The natural incorporation of the perturbation parameter in BL-PINN provides the opportunity to evaluate parametric solutions without the need for retraining. BL-PINN demonstrates an example of how classical mathematical theory could be used to guide the design of deep neural networks for solving challenging problems.
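The inner and outer regions that this abstract refers to can be seen in a textbook singular perturbation problem. The sketch below does not involve neural networks and is not taken from the paper: the model equation eps*y'' + (1 + eps)*y' + y = 0 with y(0) = 0, y(1) = 1 is an assumed example, chosen because its exact solution and its leading-order matched asymptotic expansion are both available in closed form.

```python
import math

def exact(x, eps):
    """Exact solution of eps*y'' + (1 + eps)*y' + y = 0, y(0) = 0, y(1) = 1."""
    return ((math.exp(-x) - math.exp(-x / eps))
            / (math.exp(-1.0) - math.exp(-1.0 / eps)))

def outer(x):
    """Leading-order outer solution: y' + y = 0 with y(1) = 1."""
    return math.exp(1.0 - x)

def inner(x, eps):
    """Leading-order inner solution in the stretched variable X = x / eps:
    Y'' + Y' = 0, Y(0) = 0, matched so that Y tends to outer(0) = e."""
    return math.e * (1.0 - math.exp(-x / eps))

def composite(x, eps):
    """Inner plus outer minus their common limit e."""
    return outer(x) + inner(x, eps) - math.e

eps = 0.05
grid = [i / 1000 for i in range(1001)]
max_composite_error = max(abs(composite(x, eps) - exact(x, eps)) for x in grid)
outer_error_at_wall = abs(outer(0.0) - exact(0.0, eps))
```

The outer solution alone misses the boundary condition at x = 0 by a value of order one, while the composite of the inner and outer approximations stays close to the exact solution across the whole interval. This split into separate approximations for the two regions is the classical procedure that, according to the abstract, BL-PINN is designed to replicate with parallel networks.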

A Pathologist-Informed Workflow for Classification of Prostate Glands in Histopathology, A. Ferrero, B. Knudsen, D. Sirohi, R. Whitaker. In Medical Optical Imaging and Virtual Microscopy Image Analysis, Springer Nature Switzerland, pp. 53-62. 2022. DOI: 10.1007/978-3-031-16961-8_6

Pathologists diagnose and grade prostate cancer by examining tissue from needle biopsies on glass slides. The cancer's severity and risk of metastasis are determined by the Gleason grade, a score based on the organization and morphology of prostate cancer glands. For diagnostic work-up, pathologists first locate glands in the whole biopsy core, and, if they detect cancer, they assign a Gleason grade. This time-consuming process is subject to errors and significant inter-observer variability, despite strict diagnostic criteria. This paper proposes an automated workflow that follows pathologists' modus operandi, isolating and classifying multi-scale patches of individual glands in whole slide images (WSI) of biopsy tissues using distinct steps: (1) two fully convolutional networks segment epithelium versus stroma and gland boundaries, respectively; (2) a classifier network separates benign from cancer glands at high magnification; and (3) an additional classifier predicts the grade of each cancer gland at low magnification. Altogether, this process provides a gland-specific approach for prostate cancer grading that we compare against other machine-learning-based grading methods.

Few-Shot Segmentation of Microscopy Images Using Gaussian Process, S. Saha, O. Choi, R. Whitaker. In Medical Optical Imaging and Virtual Microscopy Image Analysis, Springer Nature Switzerland, pp. 94-104. 2022. DOI: 10.1007/978-3-031-16961-8_10

Few-shot segmentation has received recent attention because of its promise to segment images containing novel classes based on a handful of annotated examples. Few-shot-based machine learning methods build generic and adaptable models that can quickly learn new tasks. This approach finds potential application in many scenarios that do not benefit from large repositories of labeled data, which strongly impacts the performance of the existing data-driven deep-learning algorithms. This paper presents a few-shot segmentation method for microscopy images that combines a neural-network architecture with a Gaussian-process (GP) regression. The GP regression is used in the latent space of an autoencoder-based segmentation model to learn the distribution of functions from the encoded image representations to the corresponding representation of the segmentation masks in the support set. This regression analysis serves as the prior for predicting the segmentation mask for the query image. The rich latent representation built by the GP using examples in the support set significantly impacts the performance of the segmentation model, demonstrated by extensive experimental evaluation.
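As a rough illustration of GP regression from support-set encodings to a query prediction, the sketch below computes a GP posterior mean with a squared-exponential kernel. It is a minimal stand-in, not the paper's model: the two-dimensional "latent codes", the scalar targets, the kernel choice, and all function names are assumptions, and there is no autoencoder involved.

```python
import math

def rbf(a, b, length_scale=1.0):
    """Squared-exponential kernel between two equal-length feature vectors."""
    sq = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-0.5 * sq / length_scale ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x

def gp_posterior_mean(support_x, support_y, query_x, noise=1e-6):
    """Posterior mean k_*^T (K + noise * I)^{-1} y at a single query point."""
    K = [[rbf(a, b) + (noise if i == j else 0.0)
          for j, b in enumerate(support_x)]
         for i, a in enumerate(support_x)]
    alpha = solve(K, support_y)
    k_star = [rbf(query_x, a) for a in support_x]
    return sum(k * a for k, a in zip(k_star, alpha))

# Hypothetical support set: latent image codes and scalar mask codes.
support_codes = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 2.0)]
mask_codes = [0.0, 1.0, 1.0, 4.0]
prediction = gp_posterior_mean(support_codes, mask_codes, (0.5, 0.5))
```

In the setting the abstract describes, the targets would be vector-valued mask representations and the prediction would be decoded into a segmentation mask; the scalar targets here only keep the example short.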

Discrete-Time Observations of Brownian Motion on Lie Groups and Homogeneous Spaces: Sampling and Metric Estimation, M.H. Jensen, S. Joshi, S. Sommer. In Algorithms, Vol. 15, No. 8, 2022. ISSN: 1999-4893 DOI: 10.3390/a15080290

We present schemes for simulating Brownian bridges on complete and connected Lie groups and homogeneous spaces. We use this to construct an estimation scheme for recovering an unknown left- or right-invariant Riemannian metric on the Lie group from samples. We subsequently show how pushing forward the distributions generated by Brownian motions on the group results in distributions on homogeneous spaces that exhibit a non-trivial covariance structure. The pushforward measure gives rise to new non-parametric families of distributions on commonly occurring spaces such as spheres and symmetric positive tensors. We extend the estimation scheme to fit these distributions to homogeneous space-valued data. We demonstrate both the simulation schemes and estimation procedures on Lie groups and homogeneous spaces, including SPD(3) = GL+(3)/SO(3) and S^2 = SO(3)/SO(2).
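To make the pushforward from a Lie group to a homogeneous space concrete, the sketch below simulates an unconditioned random walk on SO(3) and maps each rotation to the sphere S^2 = SO(3)/SO(2) by applying it to the north pole. It is a simplified illustration, not the bridge-sampling or metric-estimation scheme of the paper: it assumes the standard bi-invariant metric, uses a geodesic random walk with Gaussian increments in the Lie algebra as the discretization, and all function names are hypothetical.

```python
import math
import random

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

def hat(w):
    """Map a 3-vector to the corresponding skew-symmetric matrix in so(3)."""
    x, y, z = w
    return [[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]]

def matmul(A, B):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def exp_so3(w):
    """Rodrigues' formula for the matrix exponential of hat(w)."""
    theta = math.sqrt(sum(c * c for c in w))
    K = hat(w)
    K2 = matmul(K, K)
    if theta < 1e-8:
        a, b = 1.0, 0.5  # small-angle limits of the two coefficients
    else:
        a = math.sin(theta) / theta
        b = (1.0 - math.cos(theta)) / theta ** 2
    return [[IDENTITY[i][j] + a * K[i][j] + b * K2[i][j] for j in range(3)]
            for i in range(3)]

def random_walk_so3(steps, dt, rng):
    """Geodesic random walk g <- g * exp(xi), with xi ~ N(0, dt * I) in so(3)."""
    g = IDENTITY
    path = [g]
    for _ in range(steps):
        xi = [rng.gauss(0.0, math.sqrt(dt)) for _ in range(3)]
        g = matmul(g, exp_so3(xi))
        path.append(g)
    return path

def push_to_sphere(g):
    """Apply g to the north pole e3; the result is the third column of g."""
    return [g[i][2] for i in range(3)]

rng = random.Random(0)
path = random_walk_so3(steps=200, dt=0.01, rng=rng)
sphere_path = [push_to_sphere(g) for g in path]
```

Rotations about the vertical axis leave the north pole fixed, which is why the sphere arises as the quotient SO(3)/SO(2). Conditioning such a walk to hit a prescribed endpoint, as a Brownian bridge requires, needs additional machinery that this sketch does not attempt.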