SCI Publications

2010

A. Chaturvedi, C.R. Butson, S.F. Lempka, S.E. Cooper, C.C. McIntyre.

“Patient-specific models of deep brain stimulation: influence of field model complexity on neural activation predictions,” In Brain Stimulation, Vol. 3, No. 2, Elsevier Inc., pp. 65--77. April, 2010.

ISSN: 1935-861X

DOI: 10.1016/j.brs.2010.01.003

PubMed ID: 20607090

Keywords: Action Potentials, Action Potentials: physiology, Computer Simulation, Deep Brain Stimulation, Deep Brain Stimulation: instrumentation, Deep Brain Stimulation: methods, Humans, Male, Middle Aged, Models, Neurological, Parkinson Disease, Parkinson Disease: therapy, Subthalamic Nucleus, Subthalamic Nucleus: physiology

2009

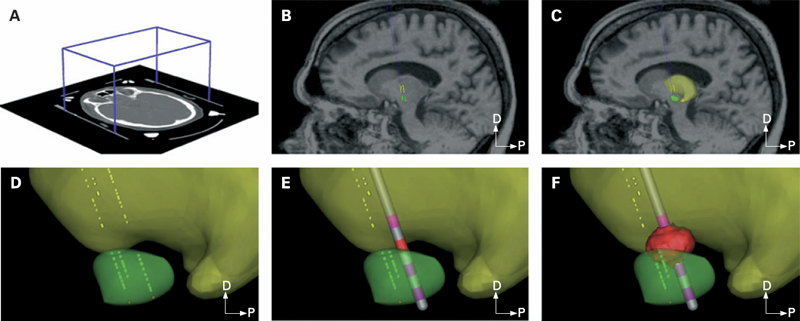

C.B. Maks, C.R. Butson, B.L. Walter, J.L. Vitek, C.C. McIntyre.

“Deep brain stimulation activation volumes and their association with neurophysiological mapping and therapeutic outcomes,” In Journal of Neurology, Neurosurgery, and Psychiatry, Vol. 80, No. 6, pp. 659--666. June, 2009.

ISSN: 1468-330X

DOI: 10.1136/jnnp.2007.126219

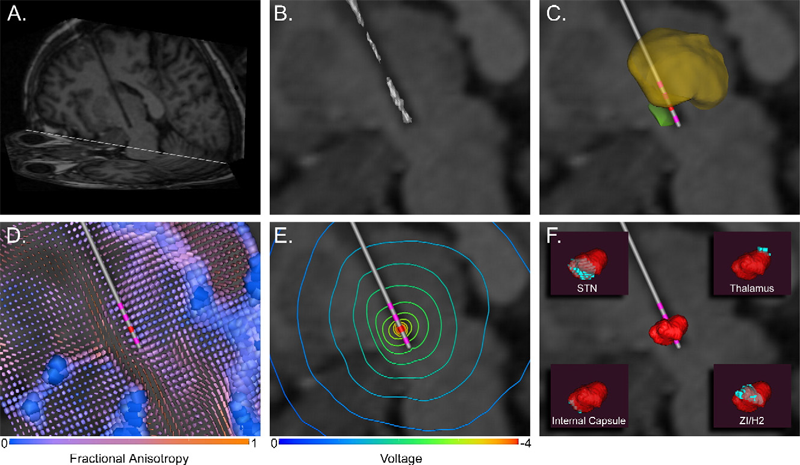

METHODS: Each patient specific model was created with a series of five steps: (1) definition of the neurosurgical stereotactic coordinate system within the context of preoperative imaging data; (2) entry of intraoperative microelectrode recording locations from neurophysiologically defined thalamic, subthalamic and substantia nigra neurons into the context of the imaging data; (3) fitting a three dimensional brain atlas to the neuroanatomy and neurophysiology of the patient; (4) positioning the DBS electrode in the documented stereotactic location, verified by postoperative imaging data; and (5) calculation of the VTA using a diffusion tensor based finite element neurostimulation model.

RESULTS: The patient specific models show that therapeutic benefit was achieved with direct stimulation of a wide range of anatomical structures in the subthalamic region. Interestingly, of the five patients exhibiting a greater than 40% improvement in their Unified PD Rating Scale (UPDRS), all but one had the majority of their VTA outside the atlas defined borders of the STN. Furthermore, of the five patients with less than 40% UPDRS improvement, all but one had the majority of their VTA inside the STN.

CONCLUSIONS: Our results are consistent with previous studies suggesting that therapeutic benefit is associated with electrode contacts near the dorsal border of the STN, and provide quantitative estimates of the electrical spread of the stimulation in a clinically relevant context.

Keywords: Brain Mapping, Brain Mapping: methods, Cerebral, Cerebral: physiology, Computer-Assisted, Computer-Assisted: methods, Deep Brain Stimulation, Deep Brain Stimulation: methods, Diffusion Magnetic Resonance Imaging, Diffusion Magnetic Resonance Imaging: methods, Dominance, Electrodes, Humans, Image Processing, Imaging, Implanted, Magnetic Resonance Imaging, Magnetic Resonance Imaging: methods, Nerve Net, Nerve Net: physiopathology, Neurologic Examination, Neurons, Neurons: physiology, Parkinson Disease, Parkinson Disease: physiopathology, Parkinson Disease: therapy, Substantia Nigra, Substantia Nigra: physiopathology, Subthalamic Nucleus, Subthalamic Nucleus: physiopathology, Synaptic Transmission, Synaptic Transmission: physiology, Thalamus, Thalamus: physiopathology, Three-Dimensional, Tomography, Treatment Outcome, X-Ray Computed, X-Ray Computed: methods

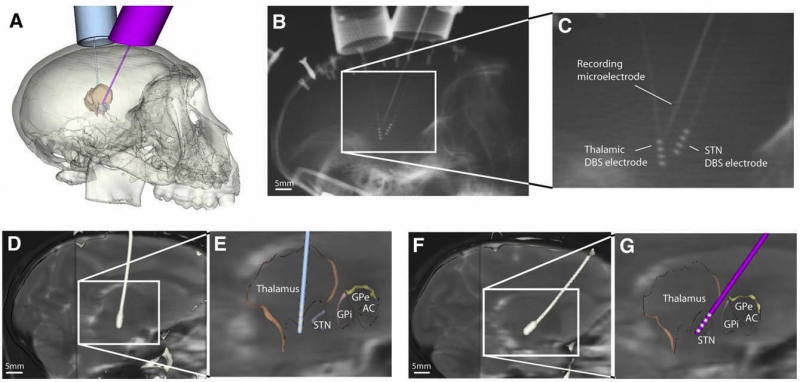

S. Miocinovic, S.F. Lempka, G.S. Russo, C.B. Maks, C.R. Butson, K.E. Sakaie, J.L. Vitek, C.C. McIntyre.

“Experimental and theoretical characterization of the voltage distribution generated by deep brain stimulation,” In Experimental Neurology, Vol. 216, No. 1, Elsevier Inc., pp. 166--176. March, 2009.

ISSN: 1090-2430

DOI: 10.1016/j.expneurol.2008.11.024

PubMed ID: 19118551

2008

C.R. Butson, G.A. Clark.

“Mechanisms of noise-induced improvement in light-intensity encoding in Hermissenda photoreceptor network,” In Journal of Neurophysiology, Vol. 99, No. 1, pp. 155--165. January, 2008.

ISSN: 0022-3077

DOI: 10.1152/jn.01250.2006

PubMed ID: 18003872

In a companion paper we showed that random channel and synaptic noise improve the ability of a biologically realistic, GENESIS-based computational model of the Hermissenda eye to encode light intensity. In this paper we explore mechanisms for noise-induced improvement by examining contextual spike-timing relationships among neurons in the photoreceptor network. In other systems, synaptically connected pairs of spiking cells can develop phase-locked spike-timing relationships at particular, well-defined frequencies. Consequently, domains of stability (DOS) emerge in which an increase in the frequency of inhibitory postsynaptic potentials can paradoxically increase, rather than decrease, the firing rate of the postsynaptic cell. We have extended this analysis to examine DOS as a function of noise amplitude in the exclusively inhibitory Hermissenda photoreceptor network. In noise-free simulations, DOS emerge at particular firing frequencies of type B and type A photoreceptors, thus producing a nonmonotonic relationship between their firing rates and light intensity. By contrast, in the noise-added conditions, an increase in noise amplitude leads to an increase in the variance of the interspike interval distribution for a given cell; in turn, this blocks the emergence of phase locking and DOS. These noise-induced changes enable the eye to better perform one of its basic tasks: encoding light intensity. This effect is independent of stochastic resonance, which is often used to describe perithreshold stimuli. The constructive role of noise in biological signal processing has implications both for understanding the dynamics of the nervous system and for the design of neural interface devices.

Keywords: Action Potentials, Action Potentials: physiology, Animals, Artifacts, Computer Simulation, Eye, Eye: cytology, Hermissenda, Hermissenda: physiology, Invertebrate, Invertebrate: physiology, Nerve Net, Nerve Net: physiology, Neurons, Neurons: physiology, Ocular, Ocular Physiological Phenomena, Ocular: physiology, Photic Stimulation, Photoreceptor Cells, Reaction Time, Reaction Time: physiology, Sensory Thresholds, Sensory Thresholds: physiology, Synaptic Transmission, Synaptic Transmission: physiology, Vision

C.R. Butson, G.A. Clark.

“Random noise paradoxically improves light-intensity encoding in Hermissenda photoreceptor network,” In Journal of Neurophysiology, Vol. 99, No. 1, pp. 146--154. January, 2008.

ISSN: 0022-3077

DOI: 10.1152/jn.01247.2006

PubMed ID: 18003873

Keywords: Action Potentials, Action Potentials: physiology, Animals, Artifacts, Computer Simulation, Eye, Eye: cytology, Hermissenda, Hermissenda: physiology, Invertebrate, Invertebrate: physiology, Nerve Net, Nerve Net: physiology, Neurons, Neurons: physiology, Ocular, Ocular Physiological Phenomena, Ocular: physiology, Photic Stimulation, Photoreceptor Cells, Reaction Time, Reaction Time: physiology, Sensory Thresholds, Sensory Thresholds: physiology, Synaptic Transmission, Synaptic Transmission: physiology, Vision

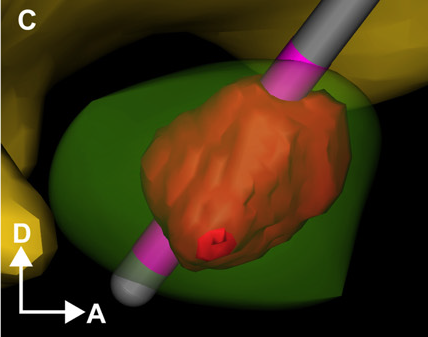

C.R. Butson, C.C. McIntyre.

“Current Steering to Control the Volume of Tissue Activated During Deep Brain Stimulation,” In Brain Stimulation, Vol. 1, No. 1, pp. 7--15. January, 2008.

DOI: 10.1016/j.brs.2007.08.004

PubMed ID: 19142235

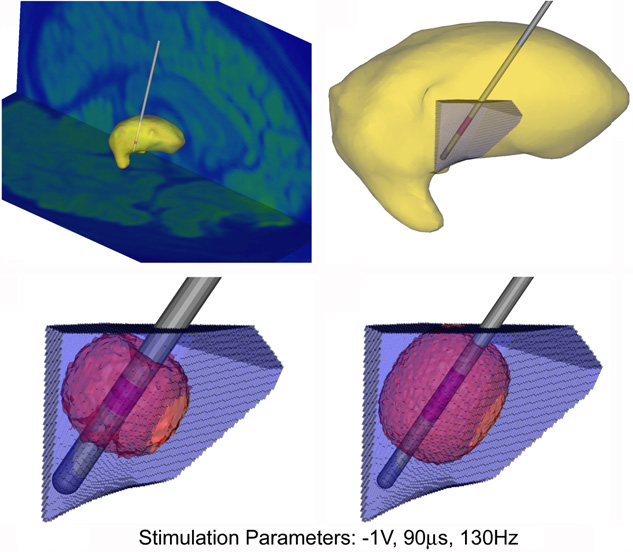

OBJECTIVE: The goals of this study were to develop a theoretical understanding of the effects of current steering in the context of DBS, and use that information to evaluate the potential utility of current steering during stimulation of the subthalamic nucleus.

METHODS: We used finite element electric field models, coupled to multi-compartment cable axon models, to predict the volume of tissue activated (VTA) by DBS as a function of the stimulation parameter settings.

RESULTS: Balancing current flow through adjacent cathodes increased the VTA magnitude, relative to monopolar stimulation, and current steering enabled us to sculpt the shape of the VTA to fit a given anatomical target.

CONCLUSIONS: These results provide motivation for the integration of current steering technology into clinical DBS systems, thereby expanding opportunities to customize DBS to individual patients, and potentially enhancing therapeutic efficacy.

2007

C.R. Butson, C.C. McIntyre.

“Differences among implanted pulse generator waveforms cause variations in the neural response to deep brain stimulation,” In Clinical Neurophysiology, Vol. 118, pp. 1889--1894. August, 2007.

ISSN: 1388-2457

DOI: 10.1016/j.clinph.2007.05.061

METHODS: We recorded waveforms from a broad range of stimulation parameter settings in each IPG model, and compared them to idealized waveforms that adhered to the parameters specified in the programming device. We then used a previously published computational model to predict the neural response to the various stimulation waveforms.

RESULTS: The stimulation waveforms produced by the IPGs differed from the idealized waveforms assumed in previous theoretical and clinical studies, and the waveforms differed among the IPG models. These differences were greater at higher frequencies and longer pulse widths, and caused variations of up to 0.4 V in activation thresholds for model axons located 3 mm from the DBS electrode contact.

CONCLUSIONS: The specific details of the stimulation waveform directly affect the neural response to DBS and should be accounted for in theoretical and experimental studies of DBS.

SIGNIFICANCE: While the clinical selection of DBS parameters is individualized to each patient based on behavioral outcomes, scientific analysis of stimulation parameter settings and clinical threshold measurements are subject to a previously unrecognized source of error unless the actual waveforms produced by the IPG are accounted for.

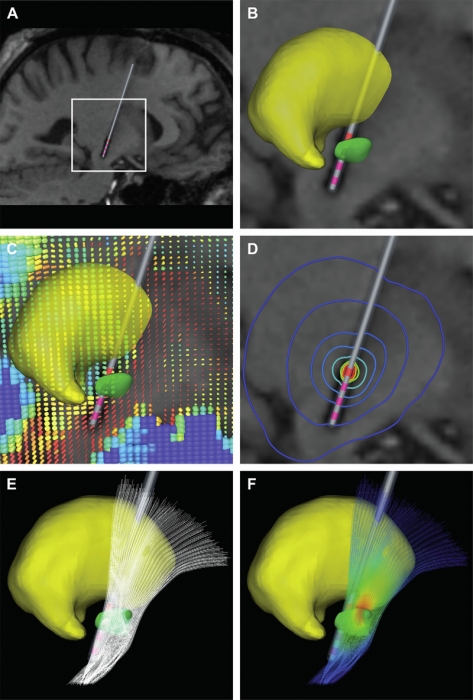

C.R. Butson, S.E. Cooper, J.M. Henderson, C.C. McIntyre.

“Patient-specific analysis of the volume of tissue activated during deep brain stimulation,” In NeuroImage, Vol. 34, No. 2, pp. 661--670. January, 2007.

ISSN: 1053-8119

DOI: 10.1016/j.neuroimage.2006.09.034

PubMed ID: 17113789

Keywords: Deep Brain Stimulation, Finite Element Analysis, Humans, Imaging, Three-Dimensional, Magnetic Resonance Imaging, Models, Neurological, Parkinson Disease, Parkinson Disease: therapy, Pyramidal Tracts, Pyramidal Tracts: physiology, Subthalamic Nucleus, Subthalamic Nucleus: physiology

2006

C.R. Butson, C.B. Maks, C.C. McIntyre.

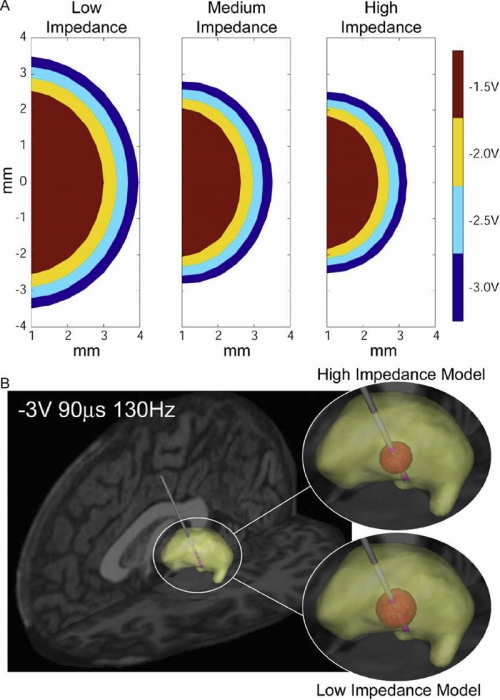

“Sources and effects of electrode impedance during deep brain stimulation,” In Clinical Neurophysiology, Vol. 117, No. 2, pp. 447--454. 2006.

DOI: 10.1016/j.clinph.2005.10.007

PubMed ID: 16376143

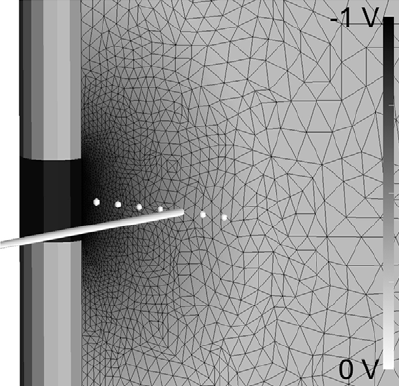

METHODS: Axisymmetric finite-element models (FEM) of the DBS system were constructed with explicit representation of encapsulation layers around the electrode and implanted pulse generator. Impedance was calculated by dividing the stimulation voltage by the integrated current density along the active electrode contact. The models utilized a Fourier FEM solver that accounted for the capacitive components of the electrode-tissue interface during voltage-controlled stimulation. The resulting time- and space-dependent voltage waveforms generated in the tissue medium were superimposed onto cable model axons to calculate the VTA.
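The impedance calculation described above (stimulation voltage divided by the current density integrated over the active contact) can be illustrated with a minimal sketch. It is not the authors' code: the function name `contact_impedance`, the patch discretization, the contact dimensions, and the uniform current density value are all assumptions chosen only to show the arithmetic.

```python
import numpy as np

def contact_impedance(voltage_v, normal_current_density, patch_areas):
    """Return impedance in ohms: applied voltage over total contact current.

    normal_current_density: current density normal to each surface patch (A/m^2)
    patch_areas: area of each surface patch on the active contact (m^2)
    """
    j = np.asarray(normal_current_density, dtype=float)
    da = np.asarray(patch_areas, dtype=float)
    total_current_a = np.sum(j * da)  # discrete form of the surface integral of J.n dA
    return voltage_v / total_current_a

# Hypothetical cylindrical contact split into equal patches (illustrative dimensions).
radius_m, height_m = 0.635e-3, 1.5e-3
n_patches = 100
areas = np.full(n_patches, 2 * np.pi * radius_m * height_m / n_patches)

# Made-up uniform current density; a finite-element solution would supply real values.
j_uniform = np.full(n_patches, 500.0)

z_ohm = contact_impedance(3.0, j_uniform, areas)
print(f"impedance: {z_ohm:.1f} ohm")
```

In a real model the current density would vary over the contact surface (typically higher near the edges), which is why the integral is taken patch by patch rather than assuming a single value.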

RESULTS: The primary determinants of electrode impedance were the thickness and conductivity of the encapsulation layer around the electrode contact and the conductivity of the bulk tissue medium. The difference in the VTA between our low (790 Ω) and high (1244 Ω) impedance models with typical DBS settings (-3 V, 90 μs, 130 Hz pulse train) was 121 mm³, representing a 52% volume reduction.

CONCLUSIONS: Electrode impedance has a substantial effect on the VTA and accurate representation of electrode impedance should be an explicit component of computational models of voltage-controlled DBS.

SIGNIFICANCE: Impedance is often used to identify broken leads (for values > 2000 Ω) or short circuits in the hardware (for values during DBS.

Keywords: Brain, Brain: physiology, Computer Simulation, Deep Brain Stimulation, Electric Conductivity, Electric Impedance, Electrodes, Humans, Imaging, Models, Neurological, Three-Dimensional

C.R. Butson, C.C. McIntyre.

“Role of electrode design on the volume of tissue activated during deep brain stimulation,” In Journal of Neural Engineering, Vol. 3, No. 1, pp. 1--8. March, 2006.

ISSN: 1741-2560

DOI: 10.1088/1741-2560/3/1/001

PubMed ID: 16510937

Keywords: Animals, Brain, Brain: physiology, Computer Simulation, Computer-Aided Design, Deep Brain Stimulation, Deep Brain Stimulation: instrumentation, Deep Brain Stimulation: methods, Electrodes, Equipment Design, Equipment Design: methods, Equipment Failure Analysis, Equipment Failure Analysis: methods, Humans, Implanted, Microelectrodes, Models, Neurological, Neurons, Neurons: physiology, Organ Size, Organ Size: physiology

2005

C.R. Butson, C.C. McIntyre.

“Tissue and electrode capacitance reduce neural activation volumes during deep brain stimulation,” In Clinical Neurophysiology, Vol. 116, No. 10, pp. 2490--2500. October, 2005.

DOI: 10.1016/j.clinph.2005.06.023

PubMed ID: 16125463

OBJECTIVE: The growing clinical acceptance of neurostimulation technology has highlighted the need to accurately predict neural activation as a function of stimulation parameters and electrode design. In this study we evaluate the effects of the tissue and electrode capacitance on the volume of tissue activated (VTA) during deep brain stimulation (DBS).

METHODS: We use a Fourier finite element method (Fourier FEM) to calculate the potential distribution in the tissue medium as a function of time and space simultaneously for a range of stimulus waveforms. The extracellular voltages are then applied to detailed multi-compartment cable models of myelinated axons to determine neural activation. Neural activation volumes are calculated as a function of the stimulation parameters and magnitude of the capacitive components of the electrode-tissue interface.
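The frequency-domain idea behind this approach can be sketched in a few lines: transform the stimulus waveform, scale and phase-shift each frequency component, and transform back. The sketch below is not the Fourier FEM itself. It swaps the per-frequency finite element solve for a single lumped circuit (an electrode capacitance in series with a tissue resistance), and the function name `tissue_voltage` and the values of `r_ohm` and `c_farad` are illustrative assumptions.

```python
import numpy as np

def tissue_voltage(stimulus_v, dt_s, r_ohm, c_farad):
    """Voltage across the tissue resistance for a voltage-controlled stimulus.

    Assumed lumped model: capacitor (electrode) in series with resistor (tissue).
    Each frequency component is multiplied by H = jwRC / (1 + jwRC).
    The FFT treats the waveform as one period of a periodic pulse train.
    """
    n = len(stimulus_v)
    omega = 2 * np.pi * np.fft.rfftfreq(n, d=dt_s)
    h = 1j * omega * r_ohm * c_farad / (1 + 1j * omega * r_ohm * c_farad)
    return np.fft.irfft(np.fft.rfft(stimulus_v) * h, n=n)

# One period of a 130 Hz train with a -3 V, 90 microsecond pulse, sampled at 1 MHz.
dt = 1e-6
n_samples = int(round(1 / (130 * dt)))
t = np.arange(n_samples) * dt
stimulus = np.where(t < 90e-6, -3.0, 0.0)

# Illustrative component values, not taken from the paper.
v_tissue = tissue_voltage(stimulus, dt, r_ohm=1000.0, c_farad=3.3e-6)
print(f"peak tissue voltage: {v_tissue.min():.2f} V")
```

Because the series capacitor blocks the DC component, the tissue waveform averages to zero over a period and its amplitude decays during the pulse, which is the qualitative reason a capacitive model predicts less activation than an electrostatic one.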

RESULTS: Inclusion of either electrode or tissue capacitance reduces the VTA compared to electrostatic simulations in a manner dependent on the capacitance magnitude and the stimulation parameters (amplitude and pulse width). Electrostatic simulations with typical DBS parameter settings (-3 V or -3 mA, 90 μs, 130 Hz) overestimate the VTA by approximately 20% for voltage- or current-controlled stimulation. In addition, strength-duration time constants decrease and more closely match clinical measurements when explicitly accounting for the effects of voltage-controlled stimulation.

CONCLUSIONS: Attempts to quantify the VTA from clinical neurostimulation devices should account for the effects of electrode and tissue capacitance.

SIGNIFICANCE: DBS has rapidly emerged as an effective treatment for movement disorders; however, little is known about the VTA during therapeutic stimulation. In addition, the influence of tissue and electrode capacitance has been largely ignored in previous models of neural stimulation. The results and methodology of this study provide the foundation for the quantitative analysis of the VTA during clinical neurostimulation.

Keywords: Algorithms, Axons, Axons: physiology, Computer Simulation, Deep Brain Stimulation, Electric Capacitance, Electrodes, Extracellular Space, Extracellular Space: physiology, Finite Element Analysis, Implanted, Models, Myelinated, Myelinated: physiology, Nerve Fibers, Neurological, Neurons, Neurons: physiology, Poisson Distribution, Statistical

2001

C.R. Butson.

“From Action Potentials to Surface Potentials,” In Multilevel Neuronal Modeling Workshop, Edinburgh, Scotland, May, 2001.

C.R. Butson, G.A. Clark.

“Random Noise Confers a Paradoxical Improvement in the Ability of a Simulated Hermissenda Photoreceptor Network to Encode Light Intensity,” In Society for Neuroscience Conference, November, 2001.

L.M. Schultz, C.R. Butson, G.A. Clark.

“Post-light potentiation at type B to A photoreceptor connections in Hermissenda,” In Neurobiology of Learning and Memory, Vol. 76, No. 1, pp. 7--32. July, 2001.
