Image Analysis

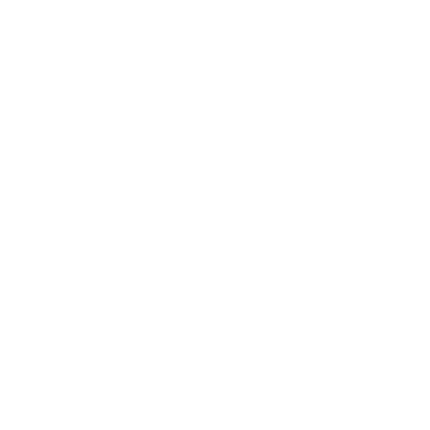

SCI's imaging work addresses fundamental questions in 2D and 3D image processing, including filtering, segmentation, surface reconstruction, and shape analysis. In low-level image processing, this effort has produced new nonparametric methods for modeling image statistics, which have resulted in better algorithms for denoising and reconstruction. Work with particle systems has led to new methods for visualizing and analyzing 3D surfaces. Our work in image processing also includes applications of advanced computing to 3D images, which have resulted in new parallel algorithms and real-time implementations on graphics processing units (GPUs). Application areas include medical image analysis, biological image processing, defense, environmental monitoring, and oil and gas.

Ross Whitaker

Segmentation

Chris Johnson

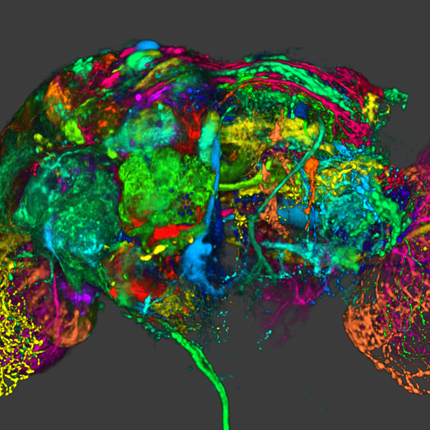

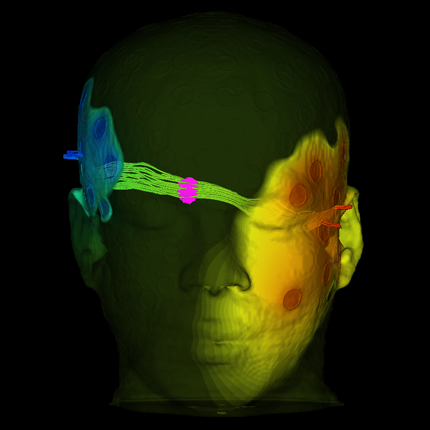

Diffusion Tensor Analysis

Funded Research Projects:

Publications in Image Analysis:

Classification of Mild Cognitive Impairment and Alzheimer's Disease Using Manual Motor Measures, V. Koppelmans, M.F.L. Ruitenberg, S.Y. Schaefer, J.B. King, J.M. Jacobo, B.P. Silvester, A.F. Mejia, J. van der Geest, J.M. Hoffman, T. Tasdizen, K. Duff. In Neurodegener Dis, 2024. DOI: 10.1159/000539800 PubMed ID: 38865972 Introduction: Manual motor problems have been reported in mild cognitive impairment (MCI) and Alzheimer's disease (AD), but the specific aspects that are affected, their neuropathology, and potential value for classification modeling is unknown. The current study examined if multiple measures of motor strength, dexterity, and speed are affected in MCI and AD, related to AD biomarkers, and are able to classify MCI or AD. Methods: Fifty-three cognitively normal (CN), 33 amnestic MCI, and 28 AD subjects completed five manual motor measures: grip force, Trail Making Test A, spiral tracing, finger tapping, and a simulated feeding task. Analyses included: 1) group differences in manual performance; 2) associations between manual function and AD biomarkers (PET amyloid β, hippocampal volume, and APOE ε4 alleles); and 3) group classification accuracy of manual motor function using machine learning. Results: amnestic MCI and AD subjects exhibited slower psychomotor speed and AD subjects had weaker dominant hand grip strength than CN subjects. Performance on these measures was related to amyloid β deposition (both) and hippocampal volume (psychomotor speed only). Support vector classification well-discriminated control and AD subjects (area under the curve of 0.73 and 0.77 respectively), but poorly discriminated MCI from controls or AD. Conclusion: Grip strength and spiral tracing appear preserved, while psychomotor speed is affected in amnestic MCI and AD. 
The association of motor performance with amyloid β deposition and atrophy could indicate that this is due to amyloid deposition in- and atrophy of motor brain regions, which generally occurs later in the disease process. The promising discriminatory abilities of manual motor measures for AD emphasize their value alongside other cognitive and motor assessment outcomes in classification and prediction models, as well as potential enrichment of outcome variables in AD clinical trials.
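The area under the curve (AUC) values quoted in the abstract above summarize how well a classifier's scores separate two groups. As a minimal sketch of that metric only (not the study's pipeline or data), the AUC can be computed as the fraction of positive/negative score pairs that are ranked correctly, counting ties as half. The function name `roc_auc` and the toy scores are illustrative assumptions.

```python
def roc_auc(scores_pos, scores_neg):
    """AUC as the probability that a random positive outscores a random negative.

    Equivalent to the normalized Mann-Whitney U statistic; ties count as 0.5.
    """
    if not scores_pos or not scores_neg:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical decision scores, invented for illustration only.
ad_scores = [0.9, 0.7, 0.6, 0.4]
cn_scores = [0.8, 0.5, 0.3, 0.2]
auc = roc_auc(ad_scores, cn_scores)
print(f"AUC = {auc:.2f}")
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, which gives a scale for reading the values reported above.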

Morphology of uranium oxides reduced from magnesium and sodium diuranate, A.M. Chalifoux, L. Gibb, K.N. Wurth, T. Tenner, T. Tasdizen, L. MacDonald. In Radiochimica Acta, Vol. 112, No. 2, pp. 73-84. 2024. Morphological analysis of uranium materials has proven to be a key signature for nuclear forensic purposes. This study examines the morphological changes to magnesium diuranate (MDU) and sodium diuranate (SDU) during reduction in a 10% hydrogen atmosphere with and without steam present. Impurity concentrations of the materials were also examined pre and post reduction using energy dispersive X-ray spectroscopy combined with scanning electron microscopy (SEM-EDX). The structures of the MDU, SDU, and UOx samples were analyzed using powder X-ray diffraction (p-XRD). Using this method, UOx from MDU was found to be a mixture of UO2, U4O9, and MgU2O6 while UOx from SDU were combinations of UO2, U4O9, U3O8, and UO3. By SEM, the MDU and UOx from MDU had identical morphologies comprised of large agglomerates of rounded particles in an irregular pattern. SEM-EDX revealed pockets of high U and high Mg content distributed throughout the materials. The SDU and UOx from SDU had slightly different morphologies. The SDU consisted of massive agglomerates of platy sheets with rough surfaces. The UOx from SDU was comprised of massive agglomerates of acicular and sub-rounded particles that appeared slightly sintered. Backscatter images of SDU and related UOx materials showed sub-rounded dark spots indicating areas of high Na content, especially in UOx materials created in the presence of steam. SEM-EDX confirmed the presence of high sodium concentration spots in the SDU and UOx from SDU. Elemental compositions were found to not change between pre and post reduction of MDU and SDU indicating that reduction with or without steam does not affect Mg or Na concentrations.
The identification of Mg and Na impurities using SEM analysis presents a readily accessible tool in nuclear material analysis with high Mg and Na impurities likely indicating processing via MDU or SDU, respectively. Machine learning using convolutional neural networks (CNNs) found that the MDU and SDU had unique morphologies compared to previous publications and that there are distinguishing features between materials created with and without steam.

Examining the role of passive design indicators in energy burden reduction: Insights from a machine learning and deep learning approach, S. Ghorbany, M. Hu, S. Yao, C. Wang, Q.C. Nguyen, X. Yue, M. Alirezaei, T. Tasdizen, M. Sisk. In Building and Environment, Elsevier, 2024. Passive design characteristics (PDC) play a pivotal role in reducing the energy burden on households without imposing additional financial constraints on project stakeholders. However, the scarcity of PDC data has posed a challenge in previous studies when assessing their energy-saving impact. To tackle this issue, this research introduces an innovative approach that combines deep learning-powered computer vision with machine learning techniques to examine the relationship between PDC and energy burden in residential buildings. In this study, we employ a convolutional neural network computer vision model to identify and measure key indicators, including window-to-wall ratio (WWR), external shading, and operable window types, using Google Street View images within the Chicago metropolitan area as our case study. Subsequently, we utilize the derived passive design features in conjunction with demographic characteristics to train and compare various machine learning methods. These methods encompass Decision Tree Regression, Random Forest Regression, and Support Vector Regression, culminating in the development of a comprehensive model for energy burden prediction. Our framework achieves 74.2% accuracy in forecasting the average energy burden. These results yield invaluable insights for policymakers and urban planners, paving the way toward the realization of smart and sustainable cities.
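Of the indicators named in the abstract above, window-to-wall ratio has a simple closed form: window area divided by gross wall area (wall plus window). The following is a minimal sketch of that arithmetic only, assuming a per-pixel label mask has already been produced by some segmentation model. The label ids, the function name `window_to_wall_ratio`, and the toy mask are hypothetical, and the sketch ignores perspective distortion in street-level imagery.

```python
# Hypothetical label ids for a facade segmentation mask (assumptions, not from the paper).
OTHER, WALL, WINDOW = 0, 1, 2


def window_to_wall_ratio(mask):
    """Return window pixels divided by gross facade pixels (wall + window)."""
    window = sum(row.count(WINDOW) for row in mask)
    wall = sum(row.count(WALL) for row in mask)
    gross = window + wall
    if gross == 0:
        raise ValueError("mask contains no facade pixels")
    return window / gross


# Toy 4x5 mask: a wall with one 2x2 window and a column of background.
mask = [
    [0, 1, 1, 1, 1],
    [0, 1, 2, 2, 1],
    [0, 1, 2, 2, 1],
    [0, 1, 1, 1, 1],
]
wwr = window_to_wall_ratio(mask)
print(f"WWR = {wwr:.2f}")
```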

Improving uranium oxide pathway discernment and generalizability using contrastive self-supervised learning, J. Johnson, L. McDonald, T. Tasdizen. In Computational Materials Science, Vol. 223, Elsevier, 2024. In the field of Nuclear Forensics, there exists a plethora of different tools to aid investigators when performing analysis of unknown nuclear materials. Many of these tools offer visual representations of the uranium ore concentrate (UOC) materials that include complementary and contrasting information. In this paper, we present a novel technique drawing from state-of-the-art machine learning methods that allows information from scanning electron microscopy (SEM) images to be combined to create digital encodings of the material that can be used to determine the material’s processing route. Our technique can classify UOC processing routes with greater than 96% accuracy in a fraction of a second and can be adapted to unseen samples at similarly high accuracy. The technique’s high accuracy and speed allow forensic investigators to quickly get preliminary results, while generalization allows the model to be adapted to new materials or processing routes quickly without the need for complete retraining of the model.

HistoEM: A Pathologist-Guided and Explainable Workflow Using Histogram Embedding for Gland Classification, A. Ferrero, E. Ghelichkhan, H. Manoochehri, M.M. Ho, D.J. Albertson, B.J. Brintz, T. Tasdizen, R.T. Whitaker, B. Knudsen. In Modern Pathology, Vol. 37, No. 4, 2024. Pathologists have, over several decades, developed criteria for diagnosing and grading prostate cancer. However, this knowledge has not, so far, been included in the design of convolutional neural networks (CNN) for prostate cancer detection and grading. Further, it is not known whether the features learned by machine-learning algorithms coincide with diagnostic features used by pathologists. We propose a framework that enforces algorithms to learn the cellular and subcellular differences between benign and cancerous prostate glands in digital slides from hematoxylin and eosin–stained tissue sections. After accurate gland segmentation and exclusion of the stroma, the central component of the pipeline, named HistoEM, utilizes a histogram embedding of features from the latent space of the CNN encoder. Each gland is represented by 128 feature-wise histograms that provide the input into a second network for benign vs cancer classification of the whole gland. Cancer glands are further processed by a U-Net structured network to separate low-grade from high-grade cancer. Our model demonstrates similar performance compared with other state-of-the-art prostate cancer grading models with gland-level resolution. To understand the features learned by HistoEM, we first rank features based on the distance between benign and cancer histograms and visualize the tissue origins of the 2 most important features. A heatmap of pixel activation by each feature is generated using Grad-CAM and overlaid on nuclear segmentation outlines. We conclude that HistoEM, similar to pathologists, uses nuclear features for the detection of prostate cancer. 
Altogether, this novel approach can be broadly deployed to visualize computer-learned features in histopathology images.
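The histogram embedding and feature ranking described in the abstract above can be sketched in a few lines. This is an illustration under stated assumptions, not the HistoEM implementation: it assumes each gland is given as a list of per-pixel feature vectors with values already scaled to [0, 1], uses an arbitrary bin count, and ranks features by the L1 distance between class-mean histograms (the abstract does not say which distance is used). All function names are hypothetical.

```python
def feature_histograms(pixel_features, n_bins=8):
    """Return one normalized histogram per feature channel for a single gland.

    pixel_features: list of per-pixel feature vectors, values assumed in [0, 1].
    """
    n_features = len(pixel_features[0])
    hists = [[0.0] * n_bins for _ in range(n_features)]
    for vec in pixel_features:
        for f, value in enumerate(vec):
            # Clamp the bin index so out-of-range values land in the edge bins.
            b = max(0, min(int(value * n_bins), n_bins - 1))
            hists[f][b] += 1.0
    n_pixels = len(pixel_features)
    return [[count / n_pixels for count in h] for h in hists]


def mean_histograms(glands):
    """Average the per-feature histograms over a list of glands."""
    n = len(glands)
    n_features, n_bins = len(glands[0]), len(glands[0][0])
    return [[sum(g[f][b] for g in glands) / n for b in range(n_bins)]
            for f in range(n_features)]


def rank_features(benign, cancer):
    """Sort feature indices by L1 distance between class-mean histograms."""
    mb, mc = mean_histograms(benign), mean_histograms(cancer)
    dist = [sum(abs(x - y) for x, y in zip(hb, hc)) for hb, hc in zip(mb, mc)]
    order = sorted(range(len(dist)), key=lambda f: dist[f], reverse=True)
    return order, dist


# Toy data: two features per pixel; only feature 1 differs between the classes.
benign = [feature_histograms([[0.5, 0.1], [0.5, 0.2]], n_bins=4)]
cancer = [feature_histograms([[0.5, 0.9], [0.5, 0.8]], n_bins=4)]
order, dist = rank_features(benign, cancer)
print(order, dist)
```

In the paper each gland is represented by 128 such histograms computed from CNN encoder features; the toy example uses two features so that the ranking is easy to verify by hand.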

A Longitudinal Analysis of Pre- and Post-Operative Dysmorphology in Metopic Craniosynostosis, J.W. Beiriger, W. Tao, Z. Irgebay, J. Smetona, L. Dvoracek, N. Kass, A. Dixon, C. Zhang, M. Mehta, R. Whitaker, J. Goldstein. In The Cleft Palate Craniofacial Journal, Sage, 2024. DOI: 10.1177/10556656241237605 Objective: The purpose of this study is to objectively quantify the degree of overcorrection in our current practice and to evaluate longitudinal morphological changes using CranioRate™, a novel machine learning skull morphology assessment tool.

Design: Retrospective cohort study across multiple time points.

Setting: Tertiary care children's hospital.

Patients: Patients with preoperative and postoperative CT scans who underwent fronto-orbital advancement (FOA) for metopic craniosynostosis.

Main Outcome Measures: We evaluated preoperative, postoperative, and two-year follow-up skull morphology using CranioRate™ to generate a Metopic Severity Score (MSS), a measure of degree of metopic dysmorphology, and Cranial Morphology Deviation (CMD) score, a measure of deviation from normal skull morphology.

Results: Fifty-five patients were included; average age at surgery was 1.3 years. Sixteen patients underwent follow-up CT imaging at an average of 3.1 years. Preoperative MSS was 6.3 ± 2.5 (CMD 199.0 ± 39.1), immediate postoperative MSS was −2.0 ± 1.9 (CMD 208.0 ± 27.1), and longitudinal MSS was 1.3 ± 1.1 (CMD 179.8 ± 28.1). MSS approached normal at two-year follow-up (defined as MSS = 0). There was a significant relationship between preoperative MSS and follow-up MSS (R² = 0.70).

Conclusions: MSS quantifies overcorrection and normalization of head shape, as patients with negative values were less “metopic” than normal postoperatively and approached 0 at 2-year follow-up. CMD worsened postoperatively due to postoperative bony changes associated with surgical displacements following FOA. All patients had similar postoperative metopic dysmorphology, with no significant association with preoperative severity. More severe patients had worse longitudinal dysmorphology, reinforcing that regression to the metopic shape is a postoperative risk which increases with preoperative severity.
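The relationship between preoperative and follow-up MSS in the Results above is reported as a coefficient of determination. As a minimal sketch of how such a value is obtained from paired scores, the code below fits an ordinary least-squares line and computes its coefficient of determination. It assumes a simple one-predictor linear fit, which the abstract does not specify, and uses invented numbers rather than study data; `linear_fit_r2` is a hypothetical name.

```python
def linear_fit_r2(x, y):
    """Fit y = a + b*x by least squares and return (a, b, r_squared)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    if sxx == 0 or ss_tot == 0:
        raise ValueError("x and y must each vary")
    b = sxy / sxx          # slope
    a = my - b * mx        # intercept
    ss_res = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    return a, b, 1.0 - ss_res / ss_tot


# Hypothetical paired severity scores, invented for illustration only.
pre_op = [2.0, 4.0, 6.0, 8.0]
follow_up = [0.5, 1.0, 1.0, 2.5]
a, b, r2 = linear_fit_r2(pre_op, follow_up)
print(f"slope = {b:.2f}, intercept = {a:.2f}, R^2 = {r2:.2f}")
```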
