Events on March 27, 2023

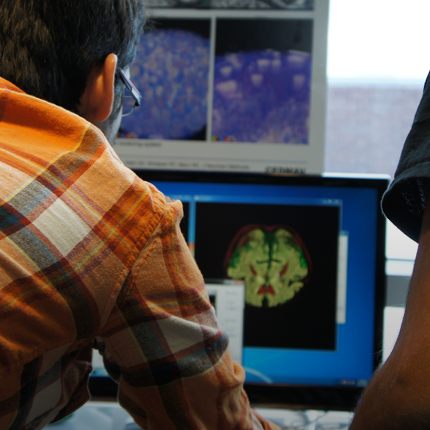

William Hsu, Associate Professor, Radiological Sciences, UCLA Presents:

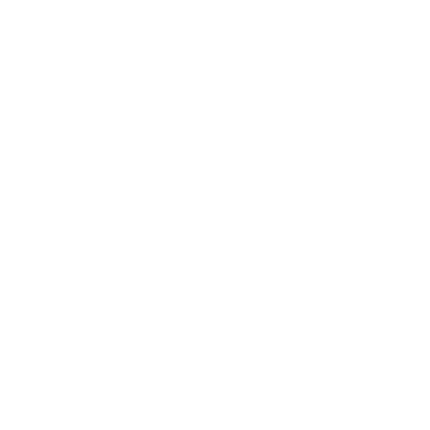

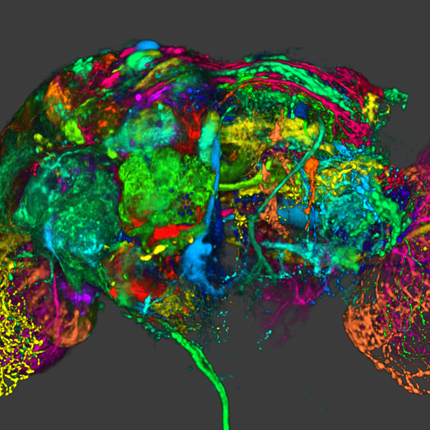

Computational Approaches for Integrating Multimodal Data Towards Precision Oncology

March 27, 2023 at 10:00am for 1hr

Evans Conference Room, WEB 3780

or join via Zoom:

https://sciinstitute.zoom.us/j/89906052840 Passcode: 537012

Abstract:

In the current data-rich healthcare environment, our capacity to collect vast amounts of longitudinal, multimodal data needs to be matched with a comparable ability to continuously learn from the data and tailor clinical decisions to an individual. For complex and highly heterogeneous diseases such as cancer, harnessing complementary information from clinical, radiologic, pathologic, and molecular data can potentially uncover biomarkers of aggressive disease. Precision oncology aims to integrate and reason upon this multimodal data to provide physicians with personalized and actionable information when diagnosing and treating cancer. However, learning meaningful insights from this data is challenging, given its unstructured and observational nature. In this talk, I will present my work applying informatics principles and machine learning techniques to acquire, integrate, and learn from multimodal datasets to improve cancer detection and diagnosis. I will discuss methods to manage data heterogeneity, interpret what models have learned, and validate models across different populations.

Bio:

William Hsu is an Associate Professor in the Department of Radiological Sciences at UCLA and a member of the Medical Imaging & Informatics group. He received his Ph.D. in Biomedical Engineering with an emphasis in Medical Imaging Informatics from the University of California, Los Angeles, in 2009 and a B.S. in Biomedical Engineering from the Johns Hopkins University in 2004. His research interests include data integration, predictive modeling, population health management, and imaging informatics. He is an active member of the American Medical Informatics Association, serving on the Working Group Steering Committee and as a leader in the Biomedical Imaging Informatics Working Group.

Posted by: Deb Zemek

Jadie Adams Presents:

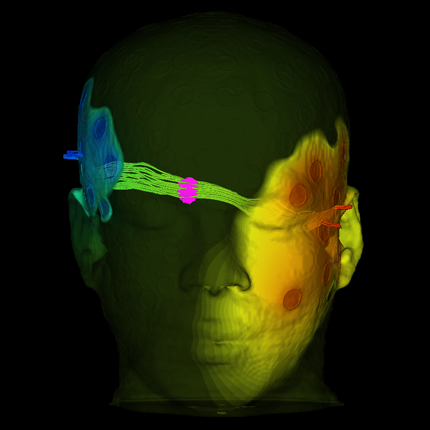

Can point cloud networks learn statistical shape models of anatomies?

March 27, 2023 at 12:00pm for 1hr

Evans Conference Room, WEB 3780

Warnock Engineering Building, 3rd floor.

Posted by: Mitra Alirezaei