Events on April 21, 2021

Tom Peterka, Computer Scientist at Argonne National Laboratory, Presents:

Scalable High-Performance Analysis of Scientific Data

April 21, 2021 at 12:00pm for 1hr

https://utah.zoom.us/j/92606341957, password: vis-sem

Abstract:

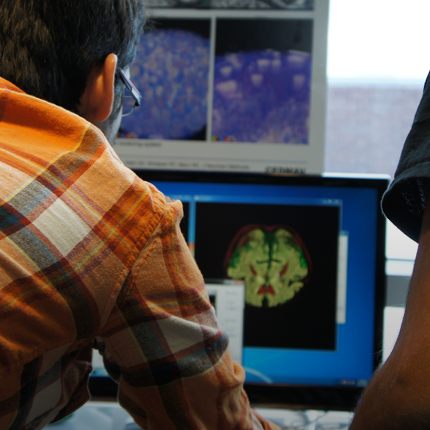

My team studies the use of supercomputers, beyond their traditional role in simulation and modeling, for the analysis and visualization of scientific data. We analyze data from scientific instruments and computer simulations generated by some of the largest science facilities in the world. Our strategy is three-layered: to develop scalable software infrastructure, to build scalable algorithms on this foundation, and to engage with applications to drive further development. In this talk, I’ll present examples from all three layers: libraries and middleware for rapidly developing scalable analysis algorithms and coupling them into in situ workflows; efficient parallel algorithms for handling high-volume and high-velocity data streams; and applications in areas such as high-energy physics, materials science, and environmental science.

Bio:

Tom Peterka is a computer scientist at Argonne National Laboratory, a scientist at the University of Chicago Consortium for Advanced Science and Engineering (CASE), and a fellow of the Northwestern Argonne Institute for Science and Engineering (NAISE). His research interests are in large-scale parallel in situ analysis of scientific data. Recipient of the 2017 DOE early career award and five best paper awards, Peterka has published over 100 peer-reviewed articles and papers since earning his Ph.D. in computer science from the University of Illinois at Chicago in 2007.

Posted by: Sudhanshu Sane