I started off rendering this on just one computer 30 hours before it was due, but as the deadline loomed I kept adding machines, so that in the end I had around 15 computers rendering different parts of the scene (with some of the parts overlapping, since I patched things together). I then wrote a script that runs the convert tool on all the separate images and combines them into a final image. A cron job ran the script periodically so that I could track the progress of my image from the web. Yeah, it's ugly, but I didn't have access to a cluster and I hadn't coded in support for parallelization, so my poor man's renderer had to do. 36 hours after I started, I had a complete image.
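For illustration, a stitching script along these lines might look like the sketch below. It is not the original script: the tile file names, offsets, and canvas size are hypothetical, and it assumes ImageMagick's `convert` is on the path and that pasting each partial render onto a black canvas with `-geometry` and `-composite` is good enough. Where tiles overlap, later ones simply overwrite earlier ones.

```python
import subprocess


def build_composite_command(tiles, canvas_size, output):
    """Build an ImageMagick `convert` command that pastes tiles onto a black canvas.

    tiles: list of (filename, x_offset, y_offset) in pixels from the top-left corner.
    Tiles are composited in order, so a later tile wins wherever two overlap.
    """
    width, height = canvas_size
    cmd = ["convert", "-size", f"{width}x{height}", "xc:black"]
    for filename, x, y in tiles:
        cmd += [filename, "-geometry", f"+{x}+{y}", "-composite"]
    cmd.append(output)
    return cmd


def stitch(tiles, canvas_size, output):
    """Run the command; raises CalledProcessError if convert fails."""
    subprocess.run(build_composite_command(tiles, canvas_size, output), check=True)


if __name__ == "__main__":
    # Hypothetical layout: two horizontal bands of a 640x480 image.
    tiles = [("part_00.png", 0, 0), ("part_01.png", 0, 240)]
    print(" ".join(build_composite_command(tiles, (640, 480), "final.png")))
```

Calling `stitch(...)` from a script that cron runs every few minutes, with the output written somewhere a web server can see it, would give the same kind of progress view described above.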

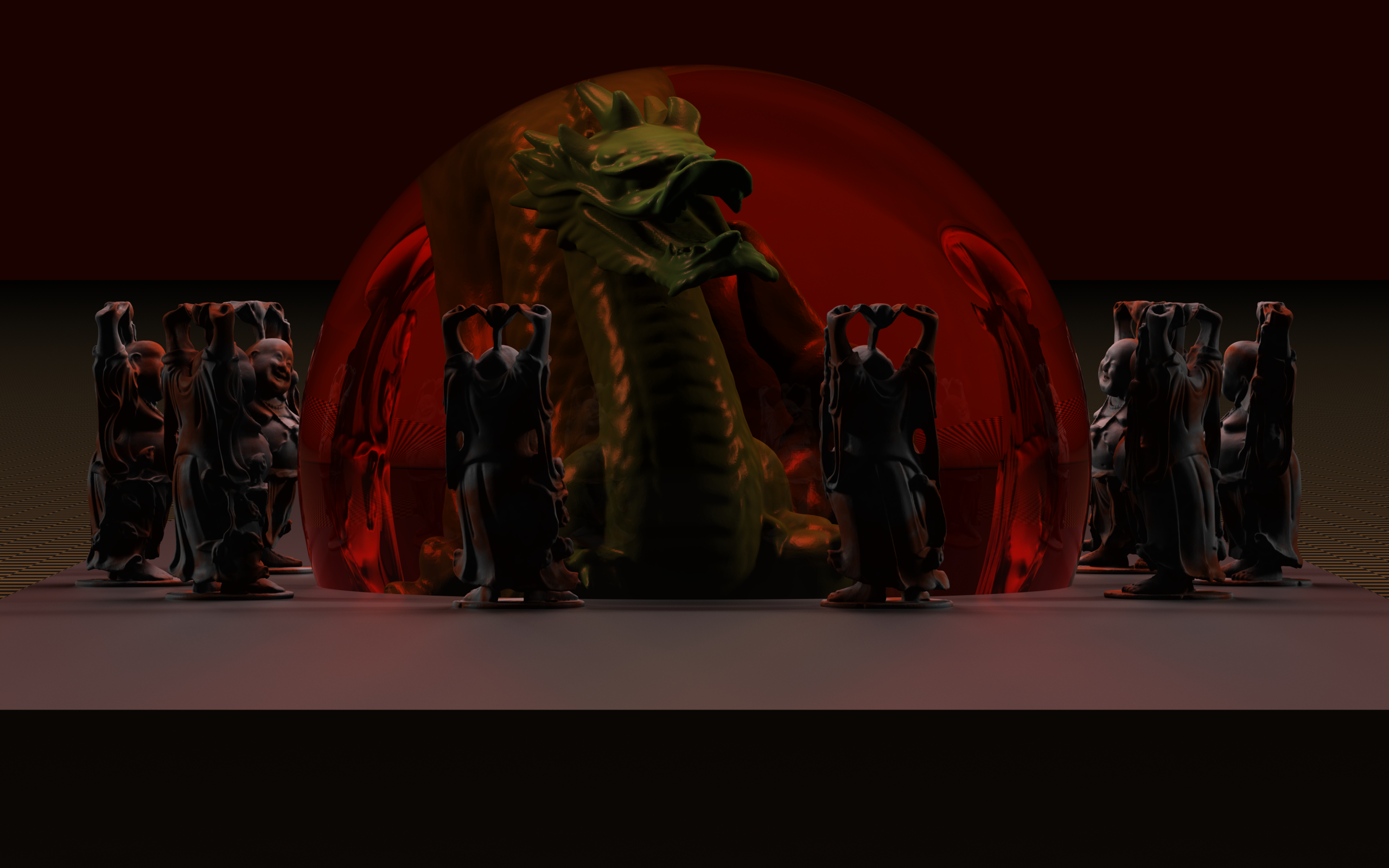

I used 225 samples per pixel with a sinc filter of support 3. The scene uses million-triangle models of the Stanford Buddha and Dragon (each in its own acceleration grid structure), and I instanced the Buddha grid to create the other Buddhas. The dragon is inside a red dielectric sphere that uses Beer's law. The floor is a checkerboard pattern and the models are on top of a box. Unfortunately, the front of the box is almost completely black: I had turned down the ambient light in order to show off my area lights, and since all the lights are behind the front face, it looks like I hadn't rendered the bottom part of the image. Too late to fix that...
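As a reminder of what Beer's law does for the red sphere: light travelling a distance d through an absorbing medium is scaled per color channel by exp(-a * d), where a is that channel's absorption coefficient. The sketch below is only an illustration, not the renderer's code; the function names and the coefficients in `RED_GLASS` are made up, chosen so that red is absorbed far less than green and blue.

```python
import math

# Hypothetical absorption coefficients (per unit length) for R, G, B.
RED_GLASS = (0.1, 2.0, 2.0)


def beer_transmittance(absorption, distance):
    """Fraction of light per channel that survives `distance` inside the medium."""
    return tuple(math.exp(-a * distance) for a in absorption)


def attenuate(radiance, absorption, distance):
    """Scale an RGB radiance by the Beer's law transmittance along the path."""
    t = beer_transmittance(absorption, distance)
    return tuple(c * k for c, k in zip(radiance, t))


if __name__ == "__main__":
    # White light after a short and a long path through the sphere.
    print(attenuate((1.0, 1.0, 1.0), RED_GLASS, 0.5))
    print(attenuate((1.0, 1.0, 1.0), RED_GLASS, 2.0))
```

The longer a ray stays inside the sphere, the darker and more saturated red it comes out, which is why thick parts of a tinted dielectric look deeper in color than thin ones.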